10 Common Data Integration Mistakes

(Featured image credit: Oracle)

Why integrate data?

Among the major responsibilities of IT management, ensuring that the business has access to reliable information is probably the most critical. This is a challenging goal for many companies because the data needed to support business decision-making is often inconsistent, redundant, and of poor quality.

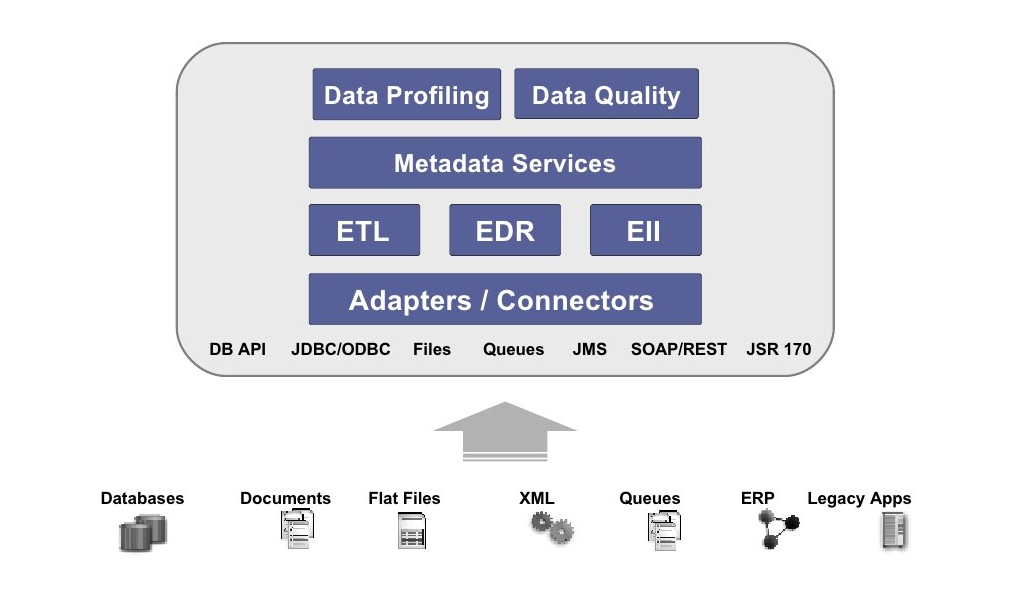

Data integration is required at the stage of creating a corporate-wide environment of trusted information. This process requires a well-thought-out architectural approach that will provide information about the business as a service to everyone who needs it. This architectural approach will typically require technology for ETL (extract, transform, and load), quality management, the creation of a metadata layer, and a strategy for master data management (MDM).

Multiple technologies involved in enterprise data integration (image credit)

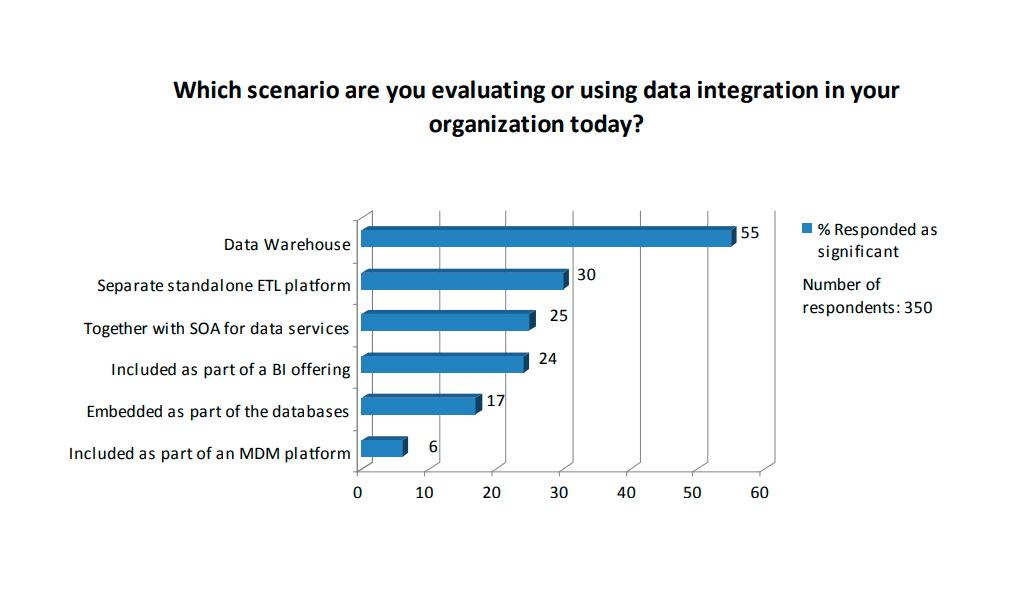

Companies can often identify why data integration is required, but then fall short on implementing the technology in a way that maximizes the benefit to the business. It can be very challenging for companies to manage the data integration process successfully.

Aiming for a strategic approach

Marcia Kaufman, a software industry analyst, has gathered 10 common mistakes that should be avoided when planning for data integration.

- Following a “fire-drill” approach to data integration. It is shortsighted to use ETL technology as a tool to solve a one-time data integration problem rather than using this technology as part of a comprehensive approach to information management.

- Not thinking about data as a shared and reusable resource. It is easier to budget based on getting a single task done. However, it is much more efficient and cost-effective to be able to reuse data resources once the second, third, and future projects are initiated.

- Thinking tactically about data integration and missing out on opportunities to improve business processes. Companies often implement data integration technology to eliminate the time-consuming and labor-intensive processes that have been required to gain a consolidated view across business units. However, it is a mistake to focus on reducing head count and saving time in the data integration process without also taking a broader strategic view toward improving overall business processes.

- Not establishing an architectural framework with the capability of providing reusable information services. Once the data is decoupled from the business application, you need to develop a methodology that supports reuse so the data can be shared in different ways as needed. The information as a service approach is designed to ensure that business services are able to consume and deliver the data they need in a trusted, controlled, consistent, and flexible way across the enterprise.

- Using software code to adjust for differences in definitions of customers, products, and other data types on a one-off project basis. In order to deliver information as a service, there needs to be a repeatable way to manage complex processes without the expense and time required for recoding. This can be accomplished with the support of a metadata infrastructure.

- Integrating data without placing a high priority on data quality. It is critically important that companies establish processes to cleanse and correct data as part of the overall data integration process. Creating standardized and consistent information will ensure that business users are more confident about business information and in a better position to grow the business and remain competitive.

- Not creating a standardized way to handle data that is common to the various disparate IT systems and business groups. Companies need to understand the commonalities across different data types. This can best be achieved by developing a master data management (MDM) strategy to serve as the system of record for the consuming systems and applications.

- The technical integration team and the business experts do not communicate effectively. There needs to be a shared language describing business processes to improve communication between business and IT management. The business is more likely to have good-quality information it can count on if IT and the business establish an efficient process for sharing knowledge and requirements.

- Business owners are reluctant to give up ownership of data. To gain efficiency and accuracy in the data integration process, it is important to establish a consensus among the various data owners regarding data terminology and definitions. There also needs to be a clear understanding of the data lineage and of who is responsible for the data over time. This often requires a significant cultural change because individual business experts often have a long history of managing data for their line of business or department as if it were a stand-alone entity. Companies need to find a way to balance the need for individual business experts to maintain control over their own data with the need for centralized management of data within a metadata environment.

- Trying to do too much in one project. When data is integrated across departmental data silos, previously inaccessible data becomes available to business users. Companies can take on projects that would have been impossible before because of the enormous amounts of hand-coding and manual data collection that would have been required. However, these benefits can be lost if companies try to tackle too much at once. Enterprise-wide information management projects must be approached in an incremental way so that there is time to evaluate and improve data quality, understand the needs of the business, and establish repeatable methodologies and processes.
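
The points above about one-off recoding and data quality can be made a little more concrete with a small sketch. The Python below is only an illustration under stated assumptions: the source system names (`crm`, `billing`), the field names, and the cleansing rules are all hypothetical and do not come from any particular product. It shows field definitions and quality rules kept as metadata, so that adding a new source means adding a mapping entry rather than writing new transformation code.

```python
from typing import Callable

# Hypothetical metadata layer: for each source system, map its local field
# names to the shared (canonical) names used across the enterprise.
FIELD_MAP: dict[str, dict[str, str]] = {
    "crm": {"cust_name": "customer_name", "mail": "email"},
    "billing": {"CustomerName": "customer_name", "EmailAddress": "email"},
}

# Hypothetical data quality rules, declared once per canonical field.
CLEANSERS: dict[str, Callable[[str], str]] = {
    "customer_name": lambda v: " ".join(v.split()).title(),  # collapse spaces, fix case
    "email": lambda v: v.strip().lower(),
}


def to_canonical(source: str, record: dict[str, str]) -> dict[str, str]:
    """Rename fields via the metadata map, then apply the cleansing rules."""
    mapping = FIELD_MAP[source]
    canonical: dict[str, str] = {}
    for field, value in record.items():
        if field not in mapping:
            continue  # fields without a canonical definition are skipped
        name = mapping[field]
        cleanse = CLEANSERS.get(name, lambda v: v)  # no rule: keep value as-is
        canonical[name] = cleanse(value)
    return canonical


# The same customer, as two source systems might store it.
crm_row = to_canonical("crm", {"cust_name": "  ada   LOVELACE ", "mail": " Ada@Example.com "})
billing_row = to_canonical("billing", {"CustomerName": "Ada Lovelace", "EmailAddress": "ada@example.com"})
```

After mapping and cleansing, both rows carry the same field names and the same standardized values, which is the precondition for matching records across systems. A real metadata infrastructure would store these definitions outside the code and cover far more cases than this sketch does.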

To implement a mature information strategy, a company should clearly understand the consequences of these mistakes and treat data integration as an ongoing process that involves all parties and focuses on data quality and business needs.
